I've started, stalled, and tried once more to write this far more times than anything else this year. There's much to cover, and by addressing specific points I risk making the current state of affairs seem far less of an issue than others have claimed. Make no mistake: there's a very real and pressing need to talk about how AI is changing the landscape of writing, so whatever deficiencies currently exist shouldn't be seen as a status quo, but as battlegrounds which will shift as new developments occur and push-back is made. While there's plenty to laugh at, there's also worrying discrimination, inherent cultural bias, and a tendency to assert the most ridiculous things as fact.

There is likely a far, far longer piece waiting to be written about this subject, so consider this an apéritif to the forthcoming digital war.

It has been said, by various people, in a number of contexts, that the current state of artificial intelligence is - in most cases, for most people - "good enough." That may very well be the case, but one might also have supposed the president's security measures were "good enough" for his visit to Dallas in 1963, the O-rings on Challenger were "good enough" to take off, and airplane cabin safety was "good enough" throughout the 1990s and early 2000s...

When something is out in the world, effecting change, influencing thought, and establishing facts, the people responsible need to be far, far more concerned than those behind these tools seem to be. While you may get an answer as to the date of a well-known battle, learn a celebrity's birthday, or be taught how to make pancakes, there are a great many areas in which AI is completely hopeless, and the gaps in its understanding are only going to become more apparent with each passing day.

These systems have been unleashed on the world without any real intelligence behind them. There is no guiding thought in what any digital assistant, whatever its origin, provides. The danger, as always, lies not in what is presented, but in who is being presented with this fictitious stew of anachronisms. A certain subset of internet users, already primed to see conspiracies within every new post, video, tweet, or other offering online, will be especially susceptible to accepting whatever inanity is provided. We aren't necessarily looking at any AI, in isolation, as a real danger, but at what it might foster in a subset of its users.

I'm not offering "what-ifs" here, looking into probable futures and picking out nightmare scenarios, but accurately recounting what has already happened: in one of the most significant instances so far, a New York federal judge sanctioned lawyers who submitted a legal brief created by ChatGPT, replete with citations of fictitious cases. Tendrils of AI's elaborate fictions will wrap around work produced in colleges and universities, and unaware authors are going to find themselves lured in by the promise of a world of facts at hand. It is a tempting prospect for the unwary. Already we are seeing the lazy, the gullible, and the foolhardy harmed by their use of AI.

What comes next might not be so funny, or so inconsequential, or so easily corrected.

This is the beginning of a duality of history, whereby one strand, that of accurate details - maintained through meticulous record-keeping, research, and printed accounts - will be pitted against wild fabrications created by unaccountable digital forces. These cold, uncaring algorithms, offering people whatever answers rise to the frothy top of their stews with no care whatsoever for truth, are not our friends, and they are not - despite decades of SF warnings - going to learn from their mistakes. They aren't being trained, they aren't growing intelligent, and they aren't coordinated. We are approaching a point where, no matter how rigorously a work has been researched and no matter its citations, digital intelligences can dismiss whatever is inconvenient as 'false news' (which seems to have been a rallying cry of the simple-minded in recent years), and we will all lose out.

It is no secret that I have been intensely critical of AI as a whole, and especially of one specific subset: those tasked with the dissemination of information. I've made my case, repeatedly and strongly, only to be met with hesitation and rebuttals. "It's convenient" is one argument, albeit an easily countered one. I have been told that my stance runs counter to what I have said about the benefits of technological advancement, but this is not so simple a case as being 'pro' or 'anti' advancement.

Yes, I want to live in a world where we have something akin to Star Trek’s miraculous computers, able to answer complex questions with authority, but technology hasn’t advanced to a point where this is possible. We are still, when looking at any of these solutions, in the technology’s infancy.

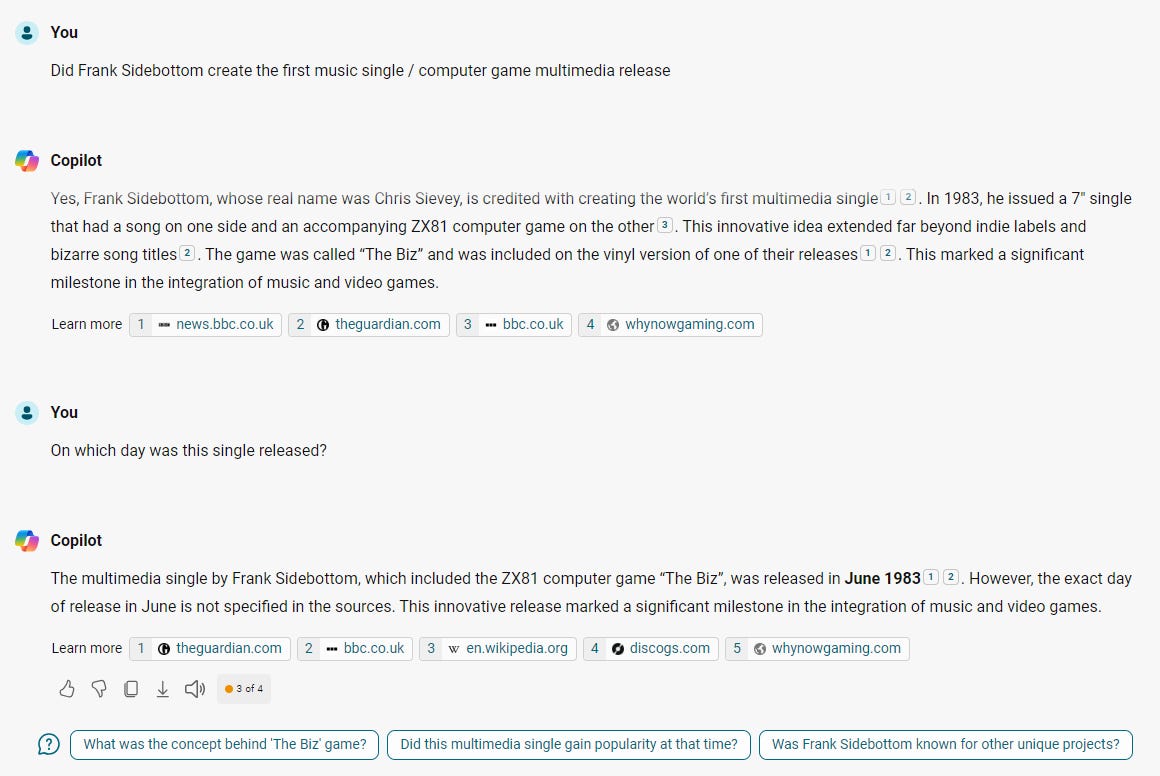

I approached my brief time (a week-and-a-bit) using Copilot (Bing's answer to AI) without, on the whole, any preconceived notion of its usefulness. There's never been an easy, one-size-fits-all answer to uncovering hidden aspects of the past, although I was genuinely hopeful in my initial attempts at securing information. This, unfortunately, quickly turned to disappointment, then frustration, then exasperation... but I'm getting ahead of myself. We'll start at the start, and get to the problems that arose in due course.

Everything goes back to my being dismissive of AI as a whole, and of the concept of a digital assistant answering questions put to it in particular. Because I've never attempted to work with such a thing, and because of my vocal dislike of it, there's been a significant "you can't know until you try it" response to anything I say on the subject. That resistance to my valid concerns is unlikely to diminish unless I show, explicitly, where this technology falls apart, so I agreed to work with it as part of my routine.

I’ll admit that this experiment was mainly to shut people up. If I can prove that I attempted to use Copilot, in the manner it is intended, without artificially pushing it into returning incorrect answers, then there might be some easing of criticism of my dismissive attitude. I tried, across a vast number of questions, to see if what came back was of any use, and if it streamlined any of the work I would have to do regardless. Nothing was added to my workload specifically to trip up Copilot, and I didn’t seek out texts which were guaranteed to prove difficult. Everything I offered up to the program needed to be researched, one way or another.

I set myself the task of getting a handle on how well Copilot understood certain things. In these initial interactions I was careful to use full, properly punctuated sentences, and to take the time (often painful, literally, with my left arm still aching) to ensure that everything was presented as clearly as possible. As I had also set myself the challenge of indexing a selection of documents, as well as taking on a couple of small jobs which I imagined might be done and dusted within a week or so, I decided that whatever information I needed to search for, requiring citations, I would also pass through Copilot. In writing things out for an AI to comprehend, I quickly noticed a few unusual things:

Simply writing a name with the word "born" after it, in order to find a date of birth, sometimes resulted in Copilot assuming that the word "born" was part of a person's name.

Using "PC" before a name, to indicate a police officer, tricked Copilot into thinking that this, also, was part of a name. I'm not sure why it can't understand this common usage, and why it has such difficulty in picking apart what is and isn't a proper name, but there were various instances where it got incredibly confused.

Certain awards marked after a name, such as DSO or MBE, caused a good deal of needless back and forth, and adding them was often more trouble than it was worth.

Requests for information on historical individuals of note, no matter how far back I went (mid-eighteenth-century individuals who were hanged for various crimes, for example), were met with warnings about respecting people's privacy, and claims that it couldn't simply answer questions as to dates of birth or death.

I did, for a while, attempt to get around some of these blocks to see if it would return a specific account of a case from the 1870s which had caught my eye, but no matter my wording, and irrespective of how I presented the question, I couldn't find a way to get what I wanted from Copilot. It assumes that certain individuals have an expectation of privacy, and it took me some time to work out that it wouldn't, whether through its coding or through correlations it had created, return anything of use regarding certain names. Having discovered this block, I went looking through records of note and obtained the information (the hard way) within about an hour. It's absolutely maddening that there's so little regard for presenting facts which are available, albeit only through searching, while it returns endless reams of explanation about unrelated matters.

And then there were the gaps. I use the word 'gaps' here since we are discussing knowledge, but a better word might be lacunae, given that we are discussing texts... I'm actually unsure which is more appropriate, but there are a great many of them, of surprising and perplexing scope. Every so often I would hit upon an event that was on the tip of my tongue and, rather than using any of the standard resources, go to Copilot in the first instance, only to be inevitably greeted with one of the most annoying sentences to read:

It’s possible that this event was not widely reported or that it’s not easily searchable online.

I've had enough time to contemplate Copilot's response, and what it says about the information available to it. First, something is obviously blocking its access to newspaper reports, as a great many things should have been simple to piece together with keywords. It's a massive disadvantage to be deprived of such important resources, especially something like The Times' notices, which carried all manner of important information. Not that I have particularly fond memories of the almost-endless slog through their archives to add appropriate bits and pieces from Dispatches. The memory of that actually made me shudder... Secondly, and more importantly, it seems to give disproportionate weight to US history, with anything regarding “civil war” being taken to mean the American Civil War.

Even slight misspellings can trip Copilot up. Several names I went looking for, rendered differently across various print publications, were given a different spelling by Copilot, which assured me that its version was, indeed, the "correct" one. For an AI to claim that it knows better than some of the best translators is a very boastful and unexpectedly annoying discovery. I’m still unsure how to feel about this, as it reframes all manner of texts as inherently “incorrect” to users.

In trying to get Copilot to return "Harold Lloyd", I asked about a Hollywood star who was popularly known by the moniker Winkle. Copilot did its best to claim that no Hollywood star was commonly known by this name, stating it as absolute fact. Only when the connection to Film Fun was made explicit, and attention drawn to the prolonged use of this name in a cover feature, did Copilot concede that this was, actually, a name by which Lloyd was known. Glad to have accomplished something of worth in correcting this digital upstart, I went about my business, giving no great thought to the interaction.

I would be happy to help you find more information. Please let me know!

I went back and asked again, almost a week after the initial conversation, and received exactly the same answers as before, claiming that there had never been a Hollywood star known by this name. This is a system which not only cannot learn, but which will avoid learning, and updating its information base, no matter what it comes to understand. I can only imagine how utterly frustrating prolonged interaction with such a wilfully stupid AI must be. It's a small thing, in the grand scale of artificial intelligence conversations, to note these instances of history being rewritten and presented to an audience as truth, but it matters.

Maybe it only matters to me, but... yes. This is something I'm willing to state is important.

I'll admit to being pleasantly surprised upon asking where to download a selection of books, comics, sheet music, and other material. I half expected, as with certain systems, a complete lack of acknowledgement of the public domain, but it not only sailed through the easy pickings (Dracula, The Three Musketeers, Don Quixote), it understood Ally Sloper's Half Holiday to be in this category as well, and even provided a link to archive.org. It's slightly disappointing that, although Copilot understood that sheet music was a thing, it couldn't locate any of the music I had asked it to find. This is mere conjecture, with no proof available, but I would hazard a guess that Copilot can't actually parse sheet music at all.

There go my plans for covering music hall tunes…

It still insisted, when dealing with certain mid-nineteenth-century authors, that copyright might yet be invoked, though I cannot fathom how any 1850s text could possibly still have rights attached. For works in languages other than English I suppose later translations might account for this, though contemporaneous translations exist for all major works of this era. Although I’m still compiling the list of Victorian works missing from online libraries, I resisted the temptation to get Copilot involved in the search for these, as that would have been cheating; I only included it in searches I had cause to make in the course of my existing workload.

I'm open to suggestions as to why there's such difficulty in dealing with music, but it struck me as curious.

In several specific instances it completely ignored the question posed to it, and disregarded my spelling in order to return irrelevant, and often extensive, diatribes on quasi-related subjects that were not asked for. The most notable instance of this was when it ignored the spelling of a singer's name to provide a history lesson about... something. I stopped its response in order to demand that it acknowledge the specific spelling I had provided, at which point it apparently got stroppy and insisted that I was referring to an unrelated group from earlier in the decade, who recorded in a different genre entirely: folk music rather than pop. It conflated musicians born in the forties with ones popular in the eighties, and stated that a record from 1964 was recorded by a duo born in the latter part of that decade.

Then there was the most infuriating exchange concerning Jerry Dove, noted for his part in the 1962 Mendenhall Glacier incident. Copilot insisted that this was the same individual as an FBI agent killed in a 1986 shootout, even claiming, when I pushed back against this, that the mountaineer's real birthdate must have been in 1962, making him under a year old at the time of the incident. I do not understand how such a broken system can be permitted to make these statements…

At certain points it seemed that my interactions were being deliberately pushed aside, and answers I requested were being somewhat ignored. If I hadn't satisfied myself that nothing I did could change the fundamental nature of the software, I would have sworn it was annoyed with me...

These kinds of errors cropped up repeatedly, across decades and nations, with conflated individuals and events being treated as singular entities no matter how painfully obvious the contradictions. Before I discovered the futility of correcting the AI, I actually spent several hours attempting to effect change. If people are to have a digital companion at their fingertips, from whom they will, presumably, be taking their cues, should that companion not be held to the highest possible standards? Or are we to accept that change will come regardless, and so permit these falsehoods to be entered into the historical record?

Where it didn't outright lie to me, it feigned ignorance of well-known events.

It’s important to note that historical records can sometimes be incomplete or vary based on the source. If you have more details or a specific source for this account, I could help look into it further.

The way Copilot words things might persuade you that there is a back and forth here, but there is not. You can have absolutely no influence on this black box of technology, which sloughs off any attempt at improvement with the disdain of a Parisian fashion plate who has stepped in dog shit.

Nothing you do matters here.

Nothing you provide will, in any way, improve this resource.

There's a baffling inconsistency to everything I have experienced, with numerous headache-inducing, pointless and inexplicable dead ends, most exasperating of all when dealing with Crimean War participants, where Copilot refused to assist in tracking down certain links between notable figures. I'm going to spend some time thinking about whether it is worth extending this experiment to cover slightly less exotic ground. It may be that there's less friction when dealing with, perhaps, seventies television, or literature from earlier in the twentieth century, but I cannot imagine that any interaction is going to be completely without issue.

I’ve still got numerous names to find birthdates for, and a great many bibliographies to put together, but I cannot bear using Copilot any longer. It slows down my work, returns irrelevant answers, and generally makes everything so much more difficult than it needs to be. Even with my reduced capacity of late, I’m still able to work faster unhindered by AI than if I were to run things past it. I can’t imagine how anyone would consider there to be a place for AI in research when it is so obviously incapable of parsing the basic information presented to it.

It is terrifying to think that there are people relying on this for their jobs, and I am baffled as to how some of its completely fabricated correlations have not been addressed.